Kartic Subr

karticsubr@cs.ucl.ac.uk

Humans, do NOT type the "ic" to email

Newton Fellow

Dept of Computer Science

University College London

Malet Place

London WC1E 6BT, UK

Ph: +44 20 7679 3876

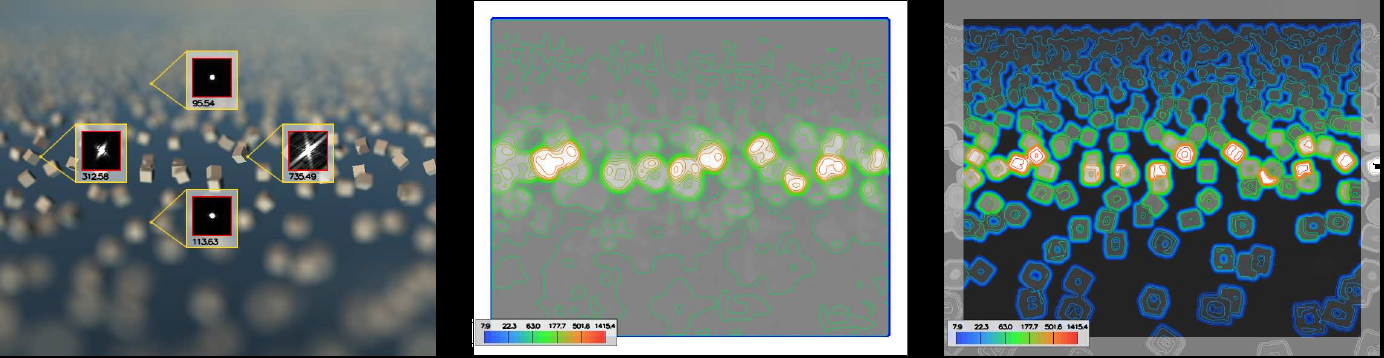

Real-time Rough Refraction

Charles De Rousiers, Adrien Bousseau, Kartic Subr, Nicolas Holzschuch, Ravi Ramamoorthi

To appear, I3D 2011

Preprint (pdf)

Video (on YouTube)

We present an algorithm to render objects of transparent materials with rough surfaces in real time, under distant illumination. Rough surfaces cause wide scattering as light enters and exits objects, which significantly complicates the rendering of such materials. We present two contributions to approximate the successive scattering events at interfaces due to rough refraction: first, an approximation of the Bidirectional Transmittance Distribution Function (BTDF) using spherical Gaussians, suitable for real-time estimation of environment lighting using pre-convolution; second, a combination of cone tracing and macro-geometry filtering to efficiently integrate the scattered rays at the exiting interface of the object. We demonstrate the quality of our approximation by comparison against stochastic ray tracing.
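Spherical Gaussians (SGs) suit this kind of approximation because the product of two SGs is again an SG and their integrals over the sphere have a closed form. The sketch below assumes the common parametrization G(v) = a·exp(λ(μ·v − 1)) with a unit axis μ, sharpness λ and amplitude a; the class and function names are illustrative, and it does not reproduce the paper's BTDF fit or its pre-convolved environment lookup.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


@dataclass
class SphericalGaussian:
    # Illustrative SG type (assumed parametrization, not taken from the paper).
    axis: Vec3         # unit lobe direction (mu)
    sharpness: float   # lobe sharpness (lambda > 0)
    amplitude: float   # peak value (a)

    def eval(self, v: Vec3) -> float:
        """G(v) = a * exp(lambda * (mu . v - 1)) for a unit direction v."""
        return self.amplitude * math.exp(self.sharpness * (dot(self.axis, v) - 1.0))

    def integral(self) -> float:
        """Closed-form integral over the unit sphere: 2*pi*a/lambda * (1 - exp(-2*lambda))."""
        return (2.0 * math.pi * self.amplitude / self.sharpness
                * (1.0 - math.exp(-2.0 * self.sharpness)))


def sg_product(g1: SphericalGaussian, g2: SphericalGaussian) -> SphericalGaussian:
    """The product of two SGs is another SG with axis/sharpness from lambda1*mu1 + lambda2*mu2."""
    u = tuple(g1.sharpness * m1 + g2.sharpness * m2 for m1, m2 in zip(g1.axis, g2.axis))
    lam = math.sqrt(dot(u, u))
    if lam == 0.0:
        raise ValueError("opposite lobes of equal sharpness give a constant, not an SG")
    axis = (u[0] / lam, u[1] / lam, u[2] / lam)
    # a1*a2*exp(u.v - l1 - l2) rewritten as a*exp(lam*(axis.v - 1)).
    amplitude = g1.amplitude * g2.amplitude * math.exp(lam - g1.sharpness - g2.sharpness)
    return SphericalGaussian(axis, lam, amplitude)
```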

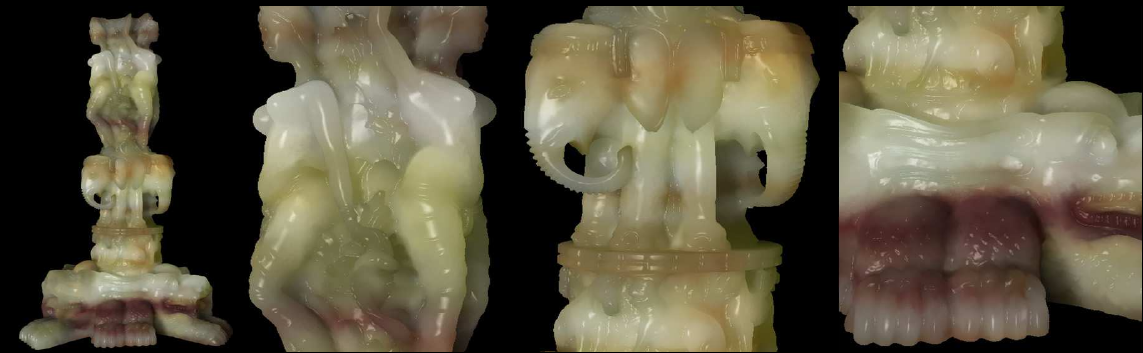

Real-time Rendering of Heterogeneous Translucent Objects with Arbitrary Shapes

Yajun Wang, Jiaping Wang, Nicolas Holzschuch, Kartic Subr, Baining Guo

To appear, Computer Graphics Forum 2010 (Proceedings of Eurographics 2010)

Preprint (pdf)

In this paper, we present a real-time algorithm for rendering translucent objects of arbitrary shapes. We approximate the light scattering process inside the object with the diffusion equation, which we solve on the fly using the GPU. Our algorithm is general enough to handle arbitrary geometry, heterogeneous materials, deformable objects and modifications of lighting, all in real time. Our algorithm works as follows: in a pre-processing step, we discretize the object into a regular 4-connected structure (QuadGraph). Thanks to its regular connectivity, this structure is easily packed into a texture and stored on the GPU. At runtime, we use the QuadGraph stored on the GPU to solve the diffusion equation in real time, taking into account the varying input conditions: the incoming light and the object material and geometry. We handle deformable objects, as long as the deformation does not change the topological structure of the objects.
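To illustrate why a regular 4-connected structure is convenient for a GPU solver, the sketch below solves a steady-state diffusion equation with absorption, D∇²φ − σ_a φ + q = 0, on a plain 2D grid by Jacobi iteration: each cell reads only its four neighbors from the previous iterate, the same access pattern a texture-packed QuadGraph permits. The flat grid, constant coefficients `D` and `sigma_a`, unit spacing and zero boundary values are simplifying assumptions; this is not the paper's discretization or solver.

```python
from typing import List

Grid = List[List[float]]
NEIGHBORS = ((-1, 0), (1, 0), (0, -1), (0, 1))  # 4-connectivity


def neighbor_sum(phi: Grid, y: int, x: int) -> float:
    """Sum of the 4-connected neighbors; cells outside the grid count as 0 (assumed boundary)."""
    h, w = len(phi), len(phi[0])
    return sum(phi[y + dy][x + dx] for dy, dx in NEIGHBORS
               if 0 <= y + dy < h and 0 <= x + dx < w)


def jacobi_diffusion(source: Grid, D: float, sigma_a: float, iterations: int = 200) -> Grid:
    """Solve D * lap(phi) - sigma_a * phi + q = 0 (unit spacing) by Jacobi iteration."""
    h, w = len(source), len(source[0])
    phi = [[0.0] * w for _ in range(h)]
    denom = 4.0 * D + sigma_a
    for _ in range(iterations):
        # Each cell is updated from the previous iterate only, so all cells are
        # independent of each other within one sweep (easy to parallelize).
        phi = [[(D * neighbor_sum(phi, y, x) + source[y][x]) / denom for x in range(w)]
               for y in range(h)]
    return phi


def max_residual(phi: Grid, source: Grid, D: float, sigma_a: float) -> float:
    """Largest absolute residual of the discrete equation, to check convergence."""
    h, w = len(phi), len(phi[0])
    return max(abs(D * (neighbor_sum(phi, y, x) - 4.0 * phi[y][x])
                   - sigma_a * phi[y][x] + source[y][x])
               for y in range(h) for x in range(w))
```

Because the denominator 4D + σ_a exceeds the sum of the neighbor weights, the iteration converges for any positive absorption.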

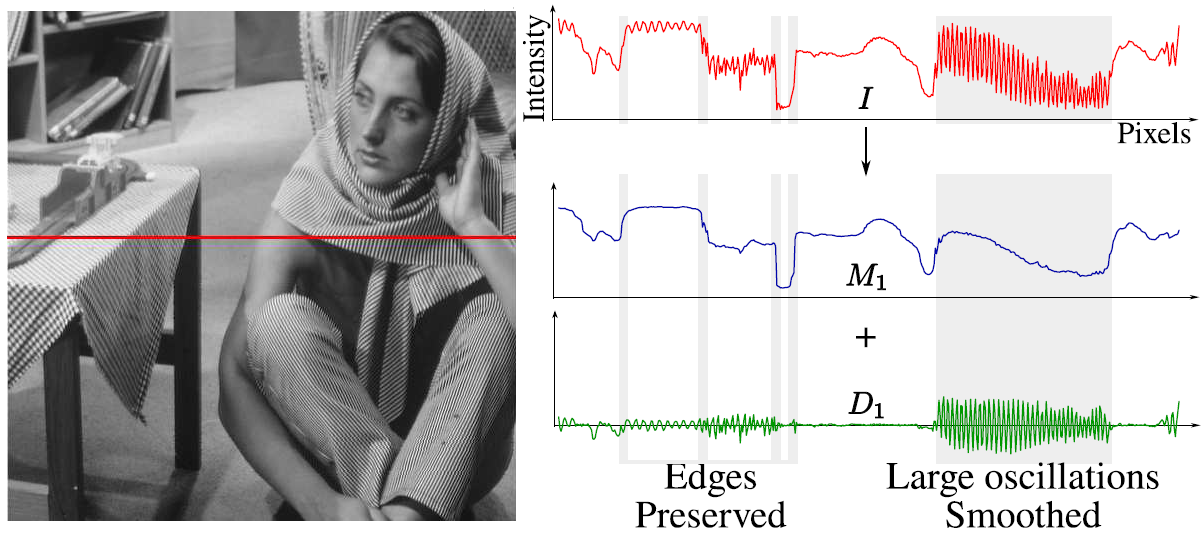

Edge-preserving Multiscale Image Decomposition based on Local Extrema

Kartic Subr, Cyril Soler, Fredo Durand

To appear, Transactions on Graphics 2009 (SIGGRAPH Asia)

Preprint (pdf)

Supplementary material (pdf)

Video (.mov, ~46 MB)

Source Code (.zip)

Slides (ppt)

We propose a new model for detail that inherently captures oscillations, a key property that distinguishes textures from individual edges. Inspired by techniques in empirical data analysis and morphological image analysis, we use the local extrema of the input image to extract information about oscillations: we define detail as oscillations between local minima and maxima. Building on the key observation that the spatial scale of oscillations is characterized by the density of local extrema, we develop an algorithm for decomposing images into multiple scales of superposed oscillations. Current edge-preserving image decompositions assume image detail to be low-contrast variation. Consequently, they apply filters that extract features with increasing contrast as successive layers of detail. As a result, they are unable to distinguish between high-contrast, fine-scale features and edges of similar contrast that are to be preserved. We compare our results with existing edge-preserving image decomposition algorithms and demonstrate exciting applications that are made possible by our new notion of detail.
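A one-dimensional sketch of the "detail as oscillation between extrema" idea: find the local minima and maxima, interpolate a lower and an upper envelope through them, and take their mean as the smooth layer; the residual is the finest detail layer, and repeating on the smooth layer yields coarser scales. The sketch uses plain linear interpolation and treats the endpoints as extrema, whereas the paper works on 2D images with an edge-aware interpolation, so it only illustrates the definition, not the published algorithm. All function names are illustrative.

```python
from typing import List, Tuple


def extrema(s: List[float], maxima: bool) -> List[int]:
    """Indices of (non-strict) local maxima or minima; endpoints are always included
    so that the envelopes cover the whole signal (a simplifying assumption)."""
    idx = [0]
    for i in range(1, len(s) - 1):
        if maxima and s[i] >= s[i - 1] and s[i] >= s[i + 1]:
            idx.append(i)
        elif not maxima and s[i] <= s[i - 1] and s[i] <= s[i + 1]:
            idx.append(i)
    idx.append(len(s) - 1)
    return idx


def envelope(s: List[float], idx: List[int]) -> List[float]:
    """Piecewise-linear interpolation of s through the given extrema indices."""
    env = list(s)
    for a, b in zip(idx, idx[1:]):
        for i in range(a, b + 1):
            t = (i - a) / (b - a)
            env[i] = (1.0 - t) * s[a] + t * s[b]
    return env


def one_level(s: List[float]) -> Tuple[List[float], List[float]]:
    """Split s into (smooth, detail); smooth is the mean of the two envelopes."""
    if len(s) < 2:
        raise ValueError("need at least two samples")
    upper = envelope(s, extrema(s, maxima=True))
    lower = envelope(s, extrema(s, maxima=False))
    smooth = [(u + l) / 2.0 for u, l in zip(upper, lower)]
    detail = [v - m for v, m in zip(s, smooth)]
    return smooth, detail


def decompose(s: List[float], levels: int) -> Tuple[List[List[float]], List[float]]:
    """Repeatedly peel off the finest oscillation; s == sum(details) + base."""
    details, current = [], list(s)
    for _ in range(levels):
        current, detail = one_level(current)
        details.append(detail)
    return details, current
```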

Fourier Depth of Field

Cyril Soler, Kartic Subr, Fredo Durand, Nicolas Holzschuch, Francois Sillion

ACM Transactions on Graphics, 2009

Paper (pdf)

Slides (ppt)

Optical systems used in photography and cinema produce depth-of-field effects, that is, variations of focus with depth. These effects can be simulated in image synthesis by integrating incoming radiance at each pixel over a finite aperture. Aperture integration can be extremely costly for defocused areas with high variance in the incoming radiance, since many samples are required in a Monte Carlo integration. However, using many aperture samples is wasteful in focused areas where the integrand varies very little. Similarly, image sampling in defocused areas should be adapted to the very smooth appearance variations due to blurring. This paper introduces an analysis of focusing and depth of field in the frequency domain, allowing a practical characterization of a light field's frequency content both for image and aperture sampling. Based on this analysis, we propose an adaptive depth of field rendering algorithm which optimizes sampling in two important ways. First, image sampling is based on conservative bandwidth prediction, and a splatting reconstruction technique ensures correct image reconstruction. Second, at each pixel the variance in the radiance over the aperture is estimated and used to govern sampling. This technique is easily integrated in any sampling-based renderer, and vastly improves performance.
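The per-pixel aperture step can be sketched as a two-pass Monte Carlo estimator: a few pilot samples estimate the variance of radiance over the aperture, and the total count is then chosen so that the standard error of the mean stays below a tolerance. In focused regions the variance is near zero and only the pilot samples are spent. The `radiance` callback and the `pilot`, `tol` and `max_samples` parameters are hypothetical, and estimating variance from pilot samples is an assumption of this sketch; it may differ from how the paper derives its estimate.

```python
import math
import random
import statistics
from typing import Callable, Tuple


def sample_unit_disk(rng: random.Random) -> Tuple[float, float]:
    """Uniform point on a unit-radius circular aperture."""
    r = math.sqrt(rng.random())
    theta = 2.0 * math.pi * rng.random()
    return r * math.cos(theta), r * math.sin(theta)


def adaptive_pixel(radiance: Callable[[float, float], float], rng: random.Random,
                   pilot: int = 16, tol: float = 0.01,
                   max_samples: int = 4096) -> Tuple[float, int]:
    """Estimate the average radiance over the aperture for one pixel (hypothetical API).

    A pilot pass estimates the variance; the total sample count n is then chosen so
    that the standard error sqrt(var / n) is at most `tol`, capped at `max_samples`.
    """
    values = [radiance(*sample_unit_disk(rng)) for _ in range(pilot)]
    var = statistics.variance(values)  # needs pilot >= 2
    n = min(max_samples, max(pilot, math.ceil(var / (tol * tol))))
    values += [radiance(*sample_unit_disk(rng)) for _ in range(n - pilot)]
    return statistics.fmean(values), n
```

A constant radiance (an in-focus pixel) stops after the pilot pass, while a radiance that varies across the aperture (a defocused pixel) receives many more samples.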

Sampling Strategies for Efficient Monte Carlo Image Synthesis

Kartic Subr

Ph.D. Dissertation, May 2008

Advisor: James Arvo

Full dissertation (pdf)

Introduction only (pdf)

Slides (pdf)

The gargantuan computational problem of light transport in physically based image synthesis is popularly made tractable by reduction to a series of sampling problems. This reduction is a consequence of using Monte Carlo integration at various stages of the transport process. In this document we describe analytic and computational tools for efficient sampling, and apply them at three stages of the light transport process: sampling the image, sampling the camera aperture, and sampling direct illumination due to distant light sources. We also adapt a standard statistical technique of inductive inference to assess different Monte Carlo sampling strategies that solve the light transport problem.

Statistical Hypothesis Testing for Assessing Monte Carlo Estimators: Applications to Image Synthesis

Kartic Subr, James Arvo

ACM Pacific Graphics, 2007

Paper (pdf)

Slides (pdf)

Image synthesis algorithms are commonly compared on the basis of running times and/or perceived quality of the generated images. In the case of Monte Carlo techniques, assessment often entails a qualitative impression of convergence toward a reference standard and severity of visible noise; these amount to subjective assessments of the mean and variance of the estimators, respectively. In this paper we argue that such assessments should be augmented by well-known statistical hypothesis testing methods. In particular, we show how to perform a number of such tests to assess random variables that commonly arise in image synthesis such as those estimating irradiance, radiance, pixel color, etc. We explore five broad categories of tests: 1) determining whether the mean is equal to a reference standard, such as an analytical value, 2) determining that the variance is bounded by a given constant, 3) comparing the means of two different random variables, 4) comparing the variances of two different random variables, and 5) verifying that two random variables stem from the same parent distribution. The level of significance of these tests can be controlled by a parameter. We demonstrate that these tests can be used for objective evaluation of Monte Carlo estimators to support claims of zero or small bias and to provide quantitative assessments of variance reduction techniques. We also show how these tests can be used to detect errors in sampling or in computing the density of an importance function in MC integrations.
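As a minimal example of the first category (is the mean equal to a reference value?), the sketch below applies a two-sided, large-sample z-test to a set of estimator outputs using only Python's standard library. The normal approximation, the function name and the default significance level are assumptions for illustration; with few samples a Student t-test is the more appropriate choice.

```python
import math
import statistics
from statistics import NormalDist
from typing import Sequence, Tuple


def mean_matches_reference(samples: Sequence[float], reference: float,
                           alpha: float = 0.05) -> Tuple[float, bool]:
    """Two-sided large-sample z-test of H0: E[X] == reference (illustrative helper).

    Returns (p_value, consistent), where `consistent` is True when H0 is not
    rejected at significance level alpha.
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean = statistics.fmean(samples)
    std_err = statistics.stdev(samples) / math.sqrt(n)
    if std_err == 0.0:
        # Degenerate case: all samples identical.
        p_value = 1.0 if mean == reference else 0.0
    else:
        z = (mean - reference) / std_err
        p_value = 2.0 * (1.0 - NormalDist().cdf(abs(z)))
    return p_value, p_value >= alpha
```

In practice, `samples` would hold many independent runs of the estimator under test (for example, repeated estimates of one pixel's irradiance) and `reference` an analytic value; a small p-value is evidence of bias or of an error in the sampling density.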

Steerable Importance Sampling

Kartic Subr, James Arvo

IEEE Conference on Interactive Raytracing, 2007

Paper (pdf)

Slides (pdf)

We present an algorithm for efficient stratified importance sampling of environment maps that generates samples in the positive hemisphere defined by local orientation of arbitrary surfaces while accounting for cosine weighting. The importance function is dynamically adjusted according to the surface normal using steerable basis functions. The algorithm is easy to implement and requires no user-defined parameters. As a preprocessing step, we approximate the incident illumination from an environment map as a continuous piecewise linear function on S^2 and represent this as a triangulated height field. The product of this approximation and a dynamically orientable steering function, viz. the cosine lobe, serves as an importance sampling function. Our method allows the importance function to be sampled with an asymptotic cost of O(log n) per sample where n is the number of triangles. The most novel aspect of the algorithm is its ability to dynamically compute normalization factors which are integrals of the illumination over the positive hemispheres defined by the local surface normals during shading. The key to this feature is that the weight variation of each triangle due to the clamped cosine steering function can be well approximated by a small number of spherical harmonic coefficients which can be accumulated over any collection of triangles, in any orientation, without introducing higher-order terms. Consequently, the weighted integral of the entire steerable piecewise-linear approximation is no more costly to compute than that of a single triangle, which makes re-weighting and re-normalizing with respect to any surface orientation a trivial constant-time operation. The choice of spherical harmonics as the set of basis functions for our steerable importance function allows for easy rotation between coordinate systems. Another novel element of our algorithm is an analytic parametrization for generating stratified samples with linearly-varying density over a triangular support.
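The O(log n) per-sample cost comes from choosing a triangle in proportion to its importance. Assuming the per-triangle weights for the current surface normal are already available (computing them via spherical harmonics is the paper's contribution and is not shown), that selection step can be sketched as a cumulative sum built once plus a binary search per sample. Function names are illustrative, and sampling a point inside the chosen triangle with linearly varying density is also omitted.

```python
import bisect
import itertools
from typing import List, Sequence, Tuple


def build_cdf(weights: Sequence[float]) -> List[float]:
    """Running sum of per-triangle importance weights (built once per normal, O(n))."""
    if any(w < 0.0 for w in weights):
        raise ValueError("weights must be non-negative")
    cdf = list(itertools.accumulate(weights))
    if not cdf or cdf[-1] <= 0.0:
        raise ValueError("total weight must be positive")
    return cdf


def pick_triangle(cdf: List[float], u: float) -> Tuple[int, float]:
    """Map a uniform u in [0, 1) to (triangle index, selection probability) in O(log n)."""
    total = cdf[-1]
    i = bisect.bisect_right(cdf, u * total)
    if i == len(cdf):
        # u * total rounded up to total: fall back to the last triangle with nonzero weight.
        i = bisect.bisect_left(cdf, total)
    prev = cdf[i - 1] if i > 0 else 0.0
    return i, (cdf[i] - prev) / total
```

With `random.random()` as the source of `u`, a zero-weight triangle is never selected, because its interval in the cumulative sum is empty; the returned probability is what an importance-sampled estimator would divide by.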

Greedy Algorithm for Local Contrast Enhancement of Images

Kartic Subr, Aditi Majumder, Sandy Irani

ICIAP 2005

We present a technique that achieves local contrast enhancement by representing it as an optimization problem. For this, we first introduce a scalar objective function that estimates the average local contrast of the image; to achieve the contrast enhancement, we seek to maximize this objective function subject to strict constraints on the local gradients and the color range of the image. The former constraint controls the amount of contrast enhancement achieved, while the latter prevents over- or under-saturation of the colors as a result of the enhancement. We propose a greedy iterative algorithm, controlled by a single parameter, to solve this optimization problem. Thus, our contrast enhancement is achieved without explicitly segmenting the image either in the spatial (multi-scale) or frequency (multi-resolution) domain. We demonstrate our method on both grayscale and color images and compare it with other existing global and local contrast enhancement techniques.
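To make the formulation concrete, the sketch below writes a simplified 1D version of the objective and the two constraints. The specific definitions are assumptions chosen for illustration: local contrast is the mean absolute difference between neighboring pixels, each gradient must keep its sign and grow by a factor between 1 and 1 + `delta`, and every value must stay in [0, 1]. The paper's actual objective and its greedy solver are not reproduced here; a greedy solver could, for example, repeatedly nudge pixel values to raise the objective while `is_feasible` stays true.

```python
from typing import Sequence


def average_local_contrast(signal: Sequence[float]) -> float:
    """Assumed objective: mean absolute difference between neighboring pixels."""
    diffs = [abs(b - a) for a, b in zip(signal, signal[1:])]
    return sum(diffs) / len(diffs)


def is_feasible(original: Sequence[float], enhanced: Sequence[float],
                delta: float, eps: float = 1e-12) -> bool:
    """Check the two assumed constraints: color range and bounded gradient scaling."""
    if len(original) != len(enhanced):
        return False
    # Color-range constraint: no over- or under-saturation.
    if any(v < 0.0 or v > 1.0 for v in enhanced):
        return False
    # Gradient constraint: each gradient keeps its sign and grows by a factor in [1, 1 + delta].
    for i in range(len(original) - 1):
        g = original[i + 1] - original[i]
        g_new = enhanced[i + 1] - enhanced[i]
        if g == 0.0:
            if abs(g_new) > eps:
                return False
        elif not (1.0 - eps <= g_new / g <= 1.0 + delta + eps):
            return False
    return True
```

Here `delta` plays the role of the single control parameter: a larger value allows stronger enhancement before the gradient constraint binds.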

Order Independent, Attenuation-Leakage Free Splatting using FreeVoxels

Kartic Subr, Pablo Diaz-Gutierrez, Renato Pajarola, M. Gopi

Technical Report ifi-2007.01, Department of Informatics, University of Zurich

In splatting-based volume rendering, there is a well-known problem of attenuation leakage that occurs due to blending operations on adjacent voxels. Hardware-accelerated volume splatting exploits the graphics hardware’s alpha-blending capability to achieve attenuation from layers of voxels. However, this alpha-blending functionality results in accumulated errors (attenuation leakage) if performed on multiple overlapping alpha values. In this paper, we introduce the concept of FreeVoxels, which are self-sufficient structures in which the data required for operations on voxels are precomputed and stored. These data are used to render each voxel independently in any order and also to eliminate the attenuation leakage. The drawback of the FreeVoxel data structure, which this paper does not address, is that it requires a significant amount of extra storage. Despite that, the advantages of a FreeVoxel data structure warrant extensive investigations in this direction. Specifically, beyond solving the attenuation leakage problem, FreeVoxels can be used to achieve order-independent rendering; in parallel volume rendering, the use of FreeVoxels allows arbitrary static data distribution with no data migration; it also enables synchronization-free rendering without compromising load balancing. A similar data structure with comparable memory requirements has also been used for opacity-based occlusion culling in volume rendering by [10]. In this paper, we also describe a hierarchical extension of FreeVoxels that lends itself to multi-resolution rendering.
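The property that makes order independence possible can be illustrated along a single ray for one fixed viewing direction: if each voxel stores its color already multiplied by its own opacity and by the transmittance of everything in front of it, the pixel value becomes a plain sum that can be accumulated in any order, with no blending between neighbors. What a FreeVoxel actually stores, and how it copes with changing view directions, is described in the report; the fields and names below are assumptions.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence, Tuple


@dataclass
class PrecomputedVoxel:
    # Hypothetical stored field, valid for one fixed view direction:
    # color_i * alpha_i * prod_{j in front of i} (1 - alpha_j)
    contribution: float


def front_to_back(samples: Sequence[Tuple[float, float]]) -> float:
    """Reference: classic ordered alpha compositing of (color, opacity) pairs, front first."""
    out, transmittance = 0.0, 1.0
    for color, alpha in samples:
        out += transmittance * alpha * color
        transmittance *= 1.0 - alpha
    return out


def precompute(samples: Sequence[Tuple[float, float]]) -> List[PrecomputedVoxel]:
    """Bake the attenuation from all voxels in front into each voxel's own record."""
    voxels, transmittance = [], 1.0
    for color, alpha in samples:
        voxels.append(PrecomputedVoxel(transmittance * alpha * color))
        transmittance *= 1.0 - alpha
    return voxels


def composite_any_order(voxels: Sequence[PrecomputedVoxel]) -> float:
    """Order-independent compositing: a plain sum of self-sufficient contributions."""
    return math.fsum(v.contribution for v in voxels)
```

`math.fsum` is used so that the result is bit-for-bit identical for every summation order.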